With recent advances, the tech industry is leaving the confines of narrow artificial intelligence (AI) and entering a twilight zone, an ill-defined area between narrow and general AI.

To date, all of the capabilities attributed to machine learning and AI have been in the category of narrow AI. No matter how sophisticated – from insurance rating to fraud detection to manufacturing quality control and aerial dogfights and even aiding with nuclear fission research – each algorithm has only been able to meet a single purpose. This means a couple of things: 1) an algorithm designed to do one thing (say, identify objects) cannot be used for anything else (play a video game, for example), and 2) anything one algorithm “learns” cannot be effectively transferred to another algorithm designed to fulfill a different specific purpose. For example, AlphaGo, the algorithm that outperformed the human world champion at the game of Go, cannot play other games, despite those games being much simpler.

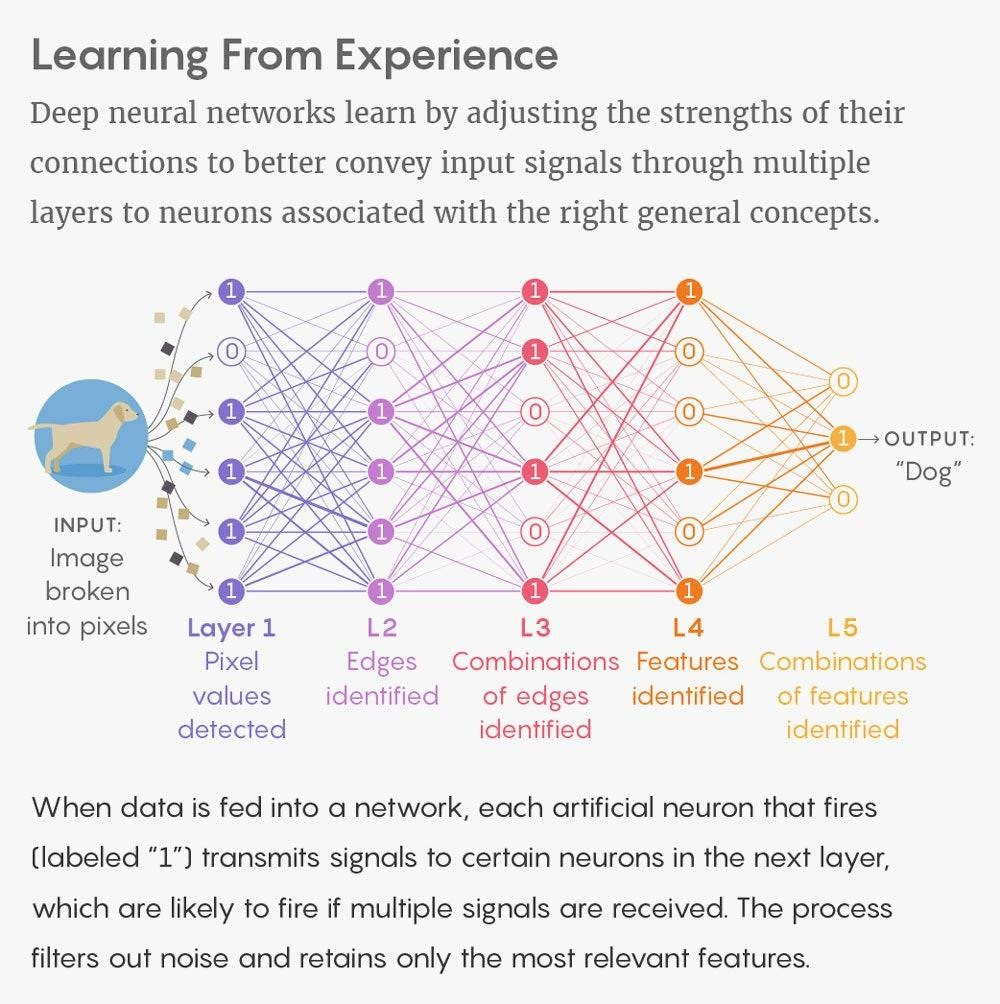

Many of the leading examples of AI today use deep learning models implemented using artificial neural networks. Emulating connected brain neurons, these networks run on graphics processing units (GPUs) – highly advanced microprocessors designed to run hundreds or thousands of computing operations in parallel, millions of times every second. The numerous layers in the neural network are meant to emulate synapses, reflecting the number of parameters that the algorithm must evaluate. Large neural networks today may have 10 billion parameters. The model functions simulate the brain, cascading information from layer to layer in the network – each layer applying its own parameters – to refine the algorithmic output. For example, in image processing, lower layers may identify edges, while higher layers may identify the concepts relevant to a human, such as digits or faces.

(Above: Deep Learning Neural Networks. Source: Lucy Reading in Quanta Magazine.)
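The layer-to-layer cascade described above can be sketched in a few lines of plain Python. This is only an illustration, not how production networks are built: the layer widths, the random weights, and the helper names (`make_layer`, `forward`, `count_parameters`) are all assumptions made for the example.

```python
import math
import random

def make_layer(n_inputs, n_outputs, rng):
    """Create one dense layer: a weight matrix plus one bias per output unit."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_inputs)]
               for _ in range(n_outputs)]
    biases = [0.0] * n_outputs
    return weights, biases

def forward(layers, inputs):
    """Cascade the input through every layer in order."""
    activations = inputs
    for weights, biases in layers:
        # Each unit takes a weighted sum of the previous layer's outputs,
        # adds its bias, and squashes the result with tanh.
        activations = [
            math.tanh(sum(w * a for w, a in zip(row, activations)) + b)
            for row, b in zip(weights, biases)
        ]
    return activations

def count_parameters(layers):
    """Total number of weights and biases across all layers."""
    return sum(len(w) * len(w[0]) + len(b) for w, b in layers)

rng = random.Random(0)
sizes = [4, 8, 8, 2]  # hypothetical widths: 4 inputs, two hidden layers, 2 outputs
layers = [make_layer(a, b, rng) for a, b in zip(sizes, sizes[1:])]
output = forward(layers, [0.5, -0.2, 0.1, 0.9])
print(len(output), count_parameters(layers))  # prints: 2 130
```

Even this toy network has 130 parameters; the networks discussed in this article have billions, which is why they depend on massively parallel hardware.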

While it’s possible to further accelerate these calculations and add more layers to the neural network to accommodate more sophisticated tasks, there are fast-approaching constraints in computing power and energy consumption that limit how much further the current paradigm can run. These limits could lead to another “AI winter,” where the technology fails to live up to the hype, reducing implementation and future investment. This has happened twice in the history of AI – in the 1980s and 1990s – and required many years each time to overcome, waiting for advances in technique or computing capabilities.

Avoiding another AI winter will require additional computing power, perhaps from processors specialized for AI functions that are now in development and are expected to be more effective and efficient than current-generation GPUs while reducing energy consumption. Dozens of companies are working on new processor designs intended to speed up the algorithms needed for AI while minimizing or eliminating circuitry that would support other uses. Another possible way to avoid an AI winter is a paradigm shift that goes beyond the current deep learning/neural network model. Greater computing power, a paradigm shift, or both could lead to a move beyond narrow AI toward “general AI,” also known as artificial general intelligence (AGI).

Are we shifting?

Unlike narrow AI algorithms, knowledge gained by general AI can be shared and retained among system components. In a general AI model, the algorithm that can beat the world’s best at Go would be able to learn chess or any other game. AGI is conceived as a generally intelligent system that can act and think much like humans, albeit at the speed of the fastest computer systems.

To date there are no examples of an AGI system, and most believe there is still a long way to go to this threshold. Earlier this year, Geoffrey Hinton, the University of Toronto professor who is a pioneer of deep learning, noted: “There are a trillion synapses in a cubic centimeter of the brain. If there is such a thing as general AI, [the system] would probably require a trillion synapses.”

Nevertheless, there are experts who believe the industry is at a turning point, shifting from narrow AI to AGI. Certainly, too, there are those who claim we are already seeing an early example of an AGI system in the recently announced GPT-3 natural language processing (NLP) neural network. While NLP systems are normally trained on a large corpus of text (this is the supervised learning approach that requires each piece of data to be labeled), advances toward AGI will require improved unsupervised learning, where AI is exposed to lots of unlabeled data and must figure out everything else itself. This is what GPT-3 does; it can learn from any text.
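To make the idea of learning from unlabeled text concrete, here is a deliberately tiny sketch: a word-pair counter that predicts the next word. It is not GPT-3's architecture, which is vastly larger and more sophisticated; the corpus and the function names (`train_bigram`, `predict_next`) are assumptions chosen for illustration. The point is only that no human labels are needed, because each word in the raw text serves as the training target for the word before it.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count which word follows which; the raw text supplies its own targets."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following

def predict_next(model, word):
    """Return the most frequently observed successor, or None if the word is unseen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]

corpus = "the cat sat on the mat and the cat slept"  # toy unlabeled text
model = train_bigram(corpus)
print(predict_next(model, "the"))  # prints: cat
```

Any text can be fed to `train_bigram` without annotation, which is the property the paragraph above attributes to GPT-3, at an enormously larger scale.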

GPT-3 “learns” based on patterns it discovers in data gleaned from the internet, from Reddit posts to Wikipedia to fan fiction and other sources. Based on that learning, GPT-3 is capable of many different tasks with no additional training: it can produce compelling narratives, generate computer code, autocomplete images, translate between languages, and perform math calculations, among other feats, including some its creators did not plan. This apparent multifunctional capability does not sound much like the definition of narrow AI. Indeed, it is much more general in function.

With 175 billion parameters, the model goes well beyond the 10 billion in the most advanced neural networks, and far beyond the 1.5 billion in its predecessor, GPT-2. That is more than a 100x increase in model complexity over GPT-2 in just over a year. Arguably, this is the largest neural network yet created and considerably closer to the one-trillion level suggested by Hinton for AGI. GPT-3 demonstrates that what passes for intelligence may be a function of computational complexity, that it arises based on the number of synapses. As Hinton suggests, when AI systems become comparable in size to human brains, they may very well become as intelligent as people. That level may be reached sooner than expected if reports of coming neural networks with a trillion parameters are true.
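The scale comparison can be checked with simple arithmetic. The sketch below uses only the parameter counts quoted in this article; the constant names are made up for the example, and treating a parameter as equivalent to a synapse is the article's analogy rather than an established equivalence.

```python
# Parameter counts as quoted in the article.
GPT2_PARAMS = 1.5e9            # GPT-2
TYPICAL_LARGE_PARAMS = 10e9    # "most advanced" networks before GPT-3
GPT3_PARAMS = 175e9            # GPT-3
HINTON_THRESHOLD = 1e12        # "a trillion synapses"

growth_over_gpt2 = GPT3_PARAMS / GPT2_PARAMS              # about 116.7x
growth_over_typical = GPT3_PARAMS / TYPICAL_LARGE_PARAMS  # 17.5x
remaining_factor = HINTON_THRESHOLD / GPT3_PARAMS         # about 5.7x still to go

print(f"GPT-3 vs GPT-2: {growth_over_gpt2:.1f}x")
print(f"GPT-3 vs 10-billion-parameter networks: {growth_over_typical:.1f}x")
print(f"Trillion-parameter level vs GPT-3: {remaining_factor:.1f}x")
```

On these figures GPT-3 is roughly 117 times the size of GPT-2, and a trillion-parameter network would be less than six times the size of GPT-3.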

The in-between

So is GPT-3 the first example of an AGI system? This is debatable, but the consensus is that it is not AGI. Nevertheless, it shows that pouring more data and more computing time and power into the deep learning paradigm can lead to astonishing results. The fact that GPT-3 is even worthy of an “is this AGI?” conversation points to something important: It signals a step-change in AI development.

This is striking, especially since the consensus of several surveys of AI experts suggests AGI is still decades into the future. If nothing else, GPT-3 tells us there is a middle ground between narrow and general AI. It is my belief that GPT-3 does not perfectly fit the definition of either narrow AI or general AI. Instead, it shows that we have advanced into a twilight zone. Thus, GPT-3 is an example of what I am calling “transitional AI.”

This transition could last just a few years, or it could last decades. The former is possible if advances in new AI chip designs move quickly and intelligence does indeed arise from complexity. Even without that, AI development is moving rapidly, as evidenced by still more breakthroughs with driverless trucks and autonomous fighter jets.

There is also still considerable debate about whether achieving general AI is a good thing. As with every advanced technology, AI can be used to solve problems or for nefarious purposes. AGI might lead to a more utopian world, or to greater dystopia. Odds are it will be both, and it looks set to arrive much sooner than expected.

Gary Grossman is the Senior VP of Technology Practice at Edelman and Global Lead of the Edelman AI Center of Excellence.