Facial recognition systems are a powerful AI innovation that perfectly showcase The First Law of Technology: “technology is neither good nor bad, nor is it neutral.” On one hand, law-enforcement agencies claim that facial recognition helps to effectively fight crime and identify suspects. On the other hand, civil rights groups such as the American Civil Liberties Union have long maintained that unchecked facial recognition capability in the hands of law-enforcement agencies enables mass surveillance and presents a unique threat to privacy.

Research has also shown that even mature facial recognition systems have significant racial and gender biases; that is, they tend to perform poorly when identifying women and people of color. In 2018, a researcher at MIT showed that many top image classifiers misclassify lighter-skinned male faces with error rates of 0.8% but misclassify darker-skinned females with error rates as high as 34.7%. More recently, the ACLU of Michigan filed a complaint in what is believed to be the first known case in the United States of a wrongful arrest because of a false facial recognition match. These biases can make facial recognition technology particularly harmful in the context of law enforcement.

One example that has received attention recently is “Depixelizer.”

The project uses a powerful AI technique called a Generative Adversarial Network (GAN) to reconstruct blurred or pixelated images; however, machine learning researchers on Twitter found that when Depixelizer is given pixelated images of non-white faces, it reconstructs those faces to look white. For example, researchers found it reconstructed former President Barack Obama as a white man and Representative Alexandria Ocasio-Cortez as a white woman.

An image of @BarackObama getting upsampled into a white guy is floating around because it illustrates racial bias in #MachineLearning. Just in case you think it isn’t real, it is, I got the code working locally. Here is me, and here is @AOC. pic.twitter.com/kvL3pwwWe1

— Robert Osazuwa Ness (@osazuwa) June 20, 2020

While the creator of the project probably didn’t intend to achieve this outcome, it likely occurred because the model was trained on a skewed dataset that lacked diversity of images, or perhaps for other reasons specific to GANs. Whatever the cause, this case illustrates how tricky it can be to create an accurate, unbiased facial recognition classifier without specifically trying.

Preventing the abuse of facial recognition systems

Currently, there are three main ways to safeguard the public interest from abusive use of facial recognition systems.

First, at a legal level, governments can implement legislation to regulate how facial recognition technology is used. Currently, there is no US federal law or regulation regarding the use of facial recognition by law enforcement. Many local governments are passing laws that either completely ban or heavily regulate the use of facial recognition systems by law enforcement; however, this progress is slow and may result in a patchwork of differing regulations.

Second, at a corporate level, companies can take a stand. Tech giants are currently evaluating the implications of their facial recognition technology. In response to the recent momentum of the Black Lives Matter movement, IBM has stopped development of new facial recognition technology, and Amazon and Microsoft have temporarily paused their collaborations with law enforcement agencies. However, facial recognition is no longer a domain limited to large tech companies. Many facial recognition systems are available in the open-source domain, and a number of smaller tech startups are eager to fill any gap in the market. For now, newly enacted privacy laws like the California Consumer Privacy Act (CCPA) do not appear to provide adequate defense against such companies. It remains to be seen whether future interpretations of CCPA (and other new state laws) will ramp up legal protections against questionable collection and use of such facial data.

Lastly, people at an individual level can attempt to take matters into their own hands and take steps to evade or confuse video surveillance systems. A number of accessories, including glasses, makeup, and t-shirts, are being created and marketed as defenses against facial recognition software. Some of these accessories, however, make the person wearing them more conspicuous. They may also not be reliable or practical. Even if they worked perfectly, it is not possible for people to have them on constantly, and law-enforcement officers can still ask individuals to remove them.

What is needed is a solution that allows people to block AI from acting on their own faces. Since privacy-encroaching facial recognition companies rely on social media platforms to scrape and collect user facial data, we envision adding a “DO NOT TRACK ME” (DNT-ME) flag to images uploaded to social networking and image-hosting platforms. When platforms see an image uploaded with this flag, they respect it by adding adversarial perturbations to the image before making it available to the public for download or scraping.
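As a rough illustration of that flow, the Python sketch below shows one way a platform might honor the flag: it keeps the clean upload for its own use and serves a perturbed copy to the public. Every name here (`StoredImage`, `handle_upload`, `placeholder_perturb`) is hypothetical, and the perturbation function is only a stand-in; a real deployment would plug in an actual adversarial perturbation computed against face-matching models.

```python
from dataclasses import dataclass
from typing import Callable, List

# Flattened pixel values in [0, 1]; a simplification of real image data.
Image = List[float]


@dataclass
class StoredImage:
    clean: Image    # retained for the platform's own AI-related tasks
    public: Image   # what downloads and scrapers receive
    dnt_me: bool    # whether the uploader set the DNT-ME flag


def handle_upload(pixels: Image, dnt_me: bool,
                  perturb: Callable[[Image], Image]) -> StoredImage:
    """Store the clean image; publish a perturbed copy only if DNT-ME is set."""
    public = perturb(pixels) if dnt_me else list(pixels)
    return StoredImage(clean=list(pixels), public=public, dnt_me=dnt_me)


def placeholder_perturb(pixels: Image, eps: float = 0.02) -> Image:
    """Stand-in only: shifts pixels by +/- eps. NOT a real adversarial attack."""
    return [min(1.0, max(0.0, p + (eps if i % 2 == 0 else -eps)))
            for i, p in enumerate(pixels)]


# Usage: one flagged upload and one unflagged upload of the same image.
flagged = handle_upload([0.5, 0.5, 0.5, 0.5], True, placeholder_perturb)
unflagged = handle_upload([0.5, 0.5, 0.5, 0.5], False, placeholder_perturb)
```

Passing the perturbation in as a callable keeps the flag handling separate from the choice of attack, so the platform could change the perturbation method without touching the upload path.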

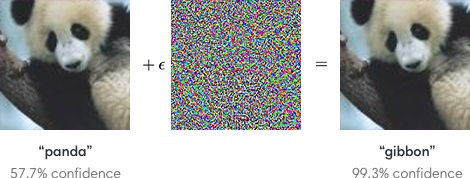

Facial recognition, like many AI systems, is vulnerable to small-but-targeted perturbations which, when added to an image, force a misclassification. Adding adversarial perturbations to images can stop facial recognition systems from linking two different images of the same person [1]. Unlike physical accessories, these digital perturbations are nearly invisible to the human eye and maintain an image’s original visual appearance.

(Above: Adversarial perturbations from the original paper by Goodfellow et al.)
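To make the idea concrete, here is a minimal Python sketch of the fast gradient sign method described by Goodfellow et al., applied to a toy logistic-regression “matcher.” The weights, bias, input, and step size are all made-up assumptions chosen for illustration; this is not a real face recognition model, and it is not necessarily the perturbation method a platform would deploy.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(x, w, b):
    """Toy model: probability that image x matches a given identity."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def sign(v):
    return (v > 0) - (v < 0)


def fgsm_perturb(x, y, w, b, eps):
    """Move each pixel by at most eps in the direction that raises the loss."""
    p = predict(x, w, b)
    # Gradient of the cross-entropy loss with respect to the input pixels.
    grad = [(p - y) * wi for wi in w]
    return [min(1.0, max(0.0, xi + eps * sign(g))) for xi, g in zip(x, grad)]


# Hypothetical toy setup: 64 "pixels" in [0, 1] and hand-picked weights.
w = [((i % 7) - 3) / 3 for i in range(64)]
b = 1.0
x = [0.5] * 64
y = 1.0  # the true label: this image does belong to the identity

x_adv = fgsm_perturb(x, y, w, b, eps=0.03)
p_clean = predict(x, w, b)    # above 0.5: the toy model reports a match
p_adv = predict(x_adv, w, b)  # below 0.5: the match is lost
```

In this toy case no pixel moves by more than 0.03 on a 0-to-1 scale, yet the model’s decision flips, which is the property the DNT-ME proposal relies on.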

This approach of DO NOT TRACK ME for images is analogous to the DO NOT TRACK (DNT) approach in the context of web browsing, which relies on websites to honor requests. Much like browser DNT, the success and effectiveness of this measure would rely on the willingness of participating platforms to endorse and implement the method, thus demonstrating their commitment to protecting user privacy. DO NOT TRACK ME would achieve the following:

Prevent abuse: Some facial recognition companies scrape social networks in order to collect large quantities of facial data, link them to individuals, and provide unvetted tracking services to law enforcement. Social networking platforms that adopt DNT-ME will be able to block such companies from abusing the platform and defend user privacy.

Integrate seamlessly: Platforms that adopt DNT-ME will still receive clean user images for their own AI-related tasks. Given the special properties of adversarial perturbations, they will not be noticeable to users and will not affect user experience of the platform negatively.

Encourage long-term adoption: In theory, users could introduce their own adversarial perturbations rather than relying on social networking platforms to do it for them. However, perturbations created in a “black-box” manner are noticeable and are likely to break the functionality of the image for the platform itself. In the long run, a black-box approach is likely to either be dropped by the user or antagonize the platforms. DNT-ME adoption by social networking platforms makes it easier to create perturbations that serve both the user and the platform.

Set precedent for other use cases: As has been the case with other privacy abuses, inaction by tech companies to contain abuses on their platforms has led to strong, and perhaps over-reaching, government regulation. Recently, many tech companies have taken proactive steps to prevent their platforms from being used for mass surveillance. For example, Signal recently added a filter to blur any face shared using its messaging platform, and Zoom now provides end-to-end encryption on video calls. We believe DNT-ME presents another opportunity for tech companies to ensure the technology they develop respects user choice and is not used to harm people.

It is important to note, however, that although DNT-ME would be a great start, it only addresses part of the problem. While independent researchers can audit facial recognition systems developed by companies, there is no mechanism for publicly auditing systems developed within the government. This is concerning considering these systems are used in such important cases as immigration, customs enforcement, court and bail systems, and law enforcement. It is therefore absolutely vital that mechanisms be put in place to allow outside researchers to check these systems for racial and gender bias, as well as other problems that have yet to be discovered.

It is the tech community’s responsibility to avoid harm through technology, but we should also actively create systems that repair harm caused by technology. We should be thinking outside the box about ways we can improve user privacy and security, and meet today’s challenges.

Saurabh Shintre and Daniel Kats are Senior Researchers at NortonLifeLock Labs.