Creators of the 80 Million Tiny Photographs information set from MIT and NYU took the gathering offline this week, apologized, and requested different researchers to chorus from the usage of the knowledge set and delete any present copies. The inside track was once shared Monday in a letter via MIT professors Invoice Freeman and Antonio Torralba and NYU professor Rob Fergus printed at the MIT CSAIL web page.

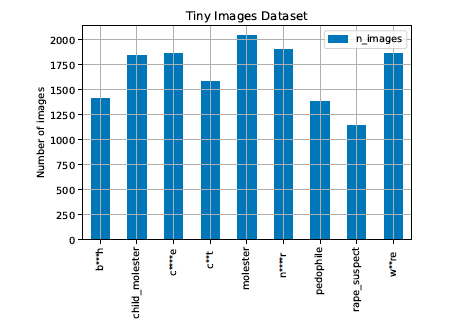

Offered in 2006 and containing footage scraped from web serps, 80 Million Tiny Photographs was once just lately discovered to include a spread of racist, sexist, and differently offensive labels reminiscent of just about 2,000 pictures categorised with the N-word, and labels like “rape suspect” and “kid molester.” The knowledge set additionally contained pornographic content material like non-consensual footage taken up girls’s skirts. Creators of the 79.three million-image information set mentioned it was once too huge and its 32 x 32 pictures too small, making visible inspection of the knowledge set’s whole contents tough. In line with Google Student, 80 Million Tiny Photographs has been cited extra 1,700 instances.

Above: Offensive labels discovered within the 80 Million Tiny Photographs information set

“Biases, offensive and prejudicial pictures, and derogatory terminology alienates crucial a part of our neighborhood — exactly those who we’re making efforts to incorporate,” the professors wrote in a joint letter. “It additionally contributes to destructive biases in AI techniques skilled on such information. Moreover, the presence of such prejudicial pictures hurts efforts to foster a tradition of inclusivity within the pc imaginative and prescient neighborhood. That is extraordinarily unlucky and runs counter to the values that we try to uphold.”

The trio of professors say the knowledge set’s shortcoming have been delivered to their consideration via an research and audit printed overdue remaining month (PDF) via College of Dublin Ph.D. pupil Abeba Birhane and Carnegie Mellon College Ph.D. pupil Vinay Prabhu. The authors say their overview is the primary identified critique of 80 Million Tiny Photographs.

Each the paper authors and the 80 Million Tiny Photographs creators say a part of the issue comes from automatic information assortment and nouns from the WordNet information set for semantic hierarchy. Sooner than the knowledge set was once taken offline, the coauthors instructed the creators of 80 Million Tiny Photographs do like ImageNet creators did and assess labels used within the folks class of the knowledge set. The paper reveals that large-scale picture information units erode privateness and may have a disproportionately destructive have an effect on on girls, racial and ethnic minorities, and communities on the margin of society.

Birhane and Prabhu assert that the pc imaginative and prescient neighborhood will have to start having extra conversations concerning the moral use of large-scale picture information units now partially because of the rising availability of image-scraping gear and opposite picture seek era. Mentioning earlier paintings just like the Excavating AI research of ImageNet, the research of large-scale picture information units displays that it’s now not only a topic of knowledge, however a question of a tradition in academia and trade that reveals it appropriate to create large-scale information units with out the consent of members “beneath the guise of anonymization.”

“[W]e posit that the deeper issues are rooted within the wider structural traditions, incentives, and discourse of a box that treats moral problems as an afterthought. A box the place within the wild is continuously a euphemism for with out consent. We’re up in opposition to a gadget that has assuredly mastered ethics buying groceries, ethics bluewashing, ethics lobbying, ethics dumping, and ethics shirking,” the paper states.

To create extra moral large-scale picture information units, Birhane and Prabhu counsel:

- Blur the faces of folks in information units

- Don’t use Ingenious Commons approved subject matter

- Accumulate imagery with transparent consent from information set members

- Come with an information set audit card with large-scale picture information units, corresponding to the style playing cards Google AI makes use of and the datasheets for information units Microsoft Analysis proposed

The paintings accommodates Birhane’s earlier paintings on relational ethics, which implies that the creators of device finding out techniques will have to start their paintings via talking with the folks maximum suffering from device finding out techniques, and that ideas of bias, equity, and justice are transferring objectives.

ImageNet was once offered at CVPR in 2009 and is broadly regarded as necessary to the development of pc imaginative and prescient and device finding out. While up to now one of the greatest information units might be counted within the tens of hundreds, ImageNet comprises greater than 14 million pictures. The ImageNet Huge Scale Visible Reputation Problem ran from 2010 to 2017 and resulted in the release of a number of startups like Clarifai and MetaMind, a corporate Salesforce got in 2017. In line with Google Student, ImageNet has been cited just about 17,000 instances.

As a part of a sequence of adjustments detailed in December 2019, ImageNet creators together with lead creator Jia Deng and Dr. Fei-Fei Li discovered that 1,593 of the two,832 folks classes within the information set doubtlessly include offensive labels, which they mentioned they plan to take away.

“We certainly have a good time ImageNet’s fulfillment and acknowledge the creators’ efforts to grapple with some moral questions. Nevertheless, ImageNet in addition to different huge picture datasets stay tough,” the Birhane and Prabhu paper reads.