January 18, 2021

Flavio Bonomi, Board Technology Advisor, Lynx Software Technologies

Across a variety of industries, and in particular within the industrial automation vertical, there is broad agreement that the deployment of modern computing resources with cloud native models of software lifecycle management will become ever more pervasive. Placing virtualized computing resources closer to where multiple streams of data are created is well established as the path to address the system latency, privacy, cost and resiliency challenges that a pure cloud computing approach cannot address. This paradigm shift was initiated at Cisco Systems around 2010 under the label “fog computing” and progressively morphed into what is now known as “edge computing”.

The requirements of mission critical industrial systems

That said, the full potential of this transformation in both computing and data analytics is far from being realized. Mission critical requirements are much more stringent than what the cloud native paradigms can deliver. This is especially true because mission critical applications have five specific requirements:

- Heterogeneous hardware – Typical industrial automation settings have different architectures (x86, Arm) as well as a variety of compute configurations on the floor

- Security – The security requirements and their mitigations vary from device to device and need to be handled carefully

- Innovation – While some industrial systems can continue with the legacy paradigm of remaining the same for over a decade, much of the industrial world now also requires modern data analytics and monitoring of the systems in its installations

- Data privacy – As in other areas of IT, data permission management is increasingly complex within connected machines and must be managed right from the origination of the data

- Real-time and determinism – The real-time determinism provided by controllers remains critical to the safety and security of the operation.

For these reasons, the market is looking for what Lynx Software Technologies refers to as the “mission critical edge”. This concept is born out of the incorporation of requirements typical of embedded computing (security, real-time and safe, deterministic behaviors) into modern networked, virtualized, containerized lifecycle management and data- and intelligence-rich computing.

The role of the mission critical edge

Without a fully manifested mission critical edge we will not be able to address the many pain points characterizing the current industrial electronic infrastructure. Specifically, we will not be able to securely consolidate, orchestrate and enrich with the fruit of data analytics and artificial intelligence (AI) the many poorly connected, fragmented and aging subsystems controlling today’s industrial environments.

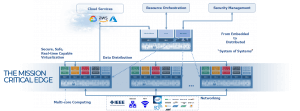

The high-level architecture picture below illustrates our vision for enabling this, as:

- Distributed and interconnected, mixed criticality capable, virtualized multi-core computing nodes (a system of systems)

- Networking support that includes traditional IT communications (e.g., Ethernet, WiFi) but also deterministic legacy field buses, moving towards IEEE time sensitive networking (TSN), and public and private 4G/5G, also moving towards determinism

- Support for data distribution within and across nodes, based on standard middleware (OPC UA, MQTT, DDS, and more), which will also strive towards determinism (e.g., OPC UA over TSN)

- Distributed nodes that must be remotely managed, with software delivered and orchestrated as virtual machines (VMs) and containers, in the style of modern cloud native microservices.

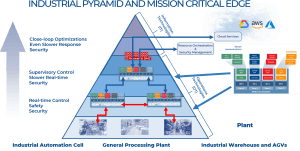

Lynx has identified the evolution of the industrial operational architecture (the architecture of the infrastructure on the industrial automation floor) as one of the most suitable targets for the realization of the full mission critical edge paradigm.

Paired with more powerful and scalable new multicore platforms, a mission critical edge computing approach can provide a unified and uniform infrastructure, going from the machine, through the industrial floor, and into the telco edge and cloud, enabling a fundamental decoupling between hardware and software. Applications, packaged as VMs and, increasingly, as containers, can be lifecycle-managed and orchestrated across all the layers of this infrastructure.

Integration into today’s fragmented industrial environments

Many poorly connected, fragmented and aging subsystems controlling today’s physical environments can be efficiently and securely consolidated, orchestrated and enriched with the fruit of data analytics and artificial intelligence.

The diagram above shows how the infrastructure would look when the mission critical edge is deployed, embedded into the operational technology area of the factory. There is a distributed set of nodes, some very close to the plant, some far away. Effectively this is like a distributed datacenter, yet it comprises a far more heterogeneous, interconnected, virtualized set of computing resources that can host applications where and when they are needed. These will be deployed in the form of virtual machines and containers orchestrated from the cloud or locally.
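To make the idea of hosting applications "where needed" concrete, here is a minimal placement sketch. It is not Lynx's orchestrator or any real scheduler API: the `Node` and `Workload` types, the field names, the node names, and the latency figures are all hypothetical, and the rule (pick the lowest-latency node whose architecture matches and whose latency fits the workload's bound) is only one plausible policy.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Node:
    name: str
    arch: str          # e.g. "x86" or "arm"
    latency_ms: float  # assumed round-trip latency to the plant equipment


@dataclass(frozen=True)
class Workload:
    name: str
    packaging: str         # "vm" or "container"
    arch: str              # architecture the image was built for
    max_latency_ms: float  # latency bound the application can tolerate


def place(workload: Workload, nodes: List[Node]) -> Optional[Node]:
    """Return the lowest-latency node that satisfies the workload, or None."""
    candidates = [
        n for n in nodes
        if n.arch == workload.arch and n.latency_ms <= workload.max_latency_ms
    ]
    return min(candidates, key=lambda n: n.latency_ms, default=None)


# Hypothetical inventory: one node near the machines, one on the line, one in the cloud.
NODES = [
    Node("cell-controller", "x86", 1.0),
    Node("line-gateway", "arm", 5.0),
    Node("cloud-region", "x86", 80.0),
]

if __name__ == "__main__":
    app = Workload("weld-inference", "container", "x86", 10.0)
    chosen = place(app, NODES)
    print(chosen.name if chosen else "no suitable node")
```

A real orchestrator would also weigh capacity, isolation, and real-time guarantees; the sketch only shows how hardware heterogeneity and latency bounds narrow the set of eligible nodes.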

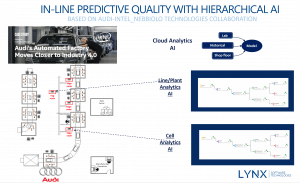

Let’s discuss a specific use case at an Audi production plant, more specifically for the Audi A3. The Neckarsulm plant has 2,500 autonomous robots on its production line. Each robot is equipped with a tool of some kind, from glue guns to screwdrivers, and performs a specific task required to assemble an Audi automobile.

Audi assembles up to approximately 1,000 vehicles every day at the Neckarsulm factory, and there are 5,000 welds in each car. To ensure the quality of its welds, Audi performs manual quality-control inspections. It is impossible to manually inspect 1,000 cars every day, however, so Audi uses the industry’s standard sampling method, pulling one car off the line each day and using ultrasound probes to test the welding spots and record the quality of every spot. Sampling is costly, labor-intensive and error prone. So, the objective was to inspect the 5,000 welds per car inline and infer the result of each weld within microseconds.
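The scale of the gap between sampling and inline inspection follows directly from the figures above. The short calculation below uses only the numbers quoted in the text (1,000 cars per day, 5,000 welds per car, one sampled car per day); the function names are illustrative.

```python
# Figures quoted in the text above.
CARS_PER_DAY = 1_000
WELDS_PER_CAR = 5_000
SAMPLED_CARS_PER_DAY = 1  # the sampling method pulls one car off the line per day


def welds_per_day(cars: int = CARS_PER_DAY, welds: int = WELDS_PER_CAR) -> int:
    """Total welds produced in a day."""
    return cars * welds


def sampled_welds_per_day(sampled: int = SAMPLED_CARS_PER_DAY,
                          welds: int = WELDS_PER_CAR) -> int:
    """Welds that manual sampling actually inspects in a day."""
    return sampled * welds


def sampling_coverage(sampled: int = SAMPLED_CARS_PER_DAY,
                      cars: int = CARS_PER_DAY) -> float:
    """Fraction of production covered by manual sampling."""
    return sampled / cars


if __name__ == "__main__":
    print(f"welds produced per day: {welds_per_day():,}")
    print(f"welds inspected by sampling: {sampled_welds_per_day():,}")
    print(f"sampling coverage: {sampling_coverage():.1%}")
```

In other words, sampling checks about 5,000 of roughly 5 million daily welds, or 0.1% of production, whereas inline inference aims to cover all of them.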

A machine-learning algorithm was created and trained for accuracy by comparing the predictions it generated to actual inspection data that Audi provided. Remember that at the edge there is a rich set of data that can be accessed. The machine learning model used data generated by the welding controllers, which showed electric voltage and current curves during the welding operation. The data also included other parameters such as the configuration of the welds, the types of metal, and the health of the electrodes.
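The article does not describe the model itself, so the following is only a sketch of the evaluation loop mentioned above: derive a feature from the voltage and current curves, predict weld quality, and compare the predictions with inspection labels. The energy-threshold rule is a stand-in for the real model, and every name and number here (`WeldRecord`, `min_energy_j`, the sample curves) is hypothetical, synthetic data.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class WeldRecord:
    voltage: Sequence[float]  # volts, sampled at a fixed interval
    current: Sequence[float]  # amperes, sampled at the same instants
    inspected_ok: bool        # label from the manual (ultrasound) inspection


def delivered_energy(rec: WeldRecord, dt_s: float) -> float:
    """Approximate electrical energy in joules as sum(v * i) * dt."""
    return sum(v * i for v, i in zip(rec.voltage, rec.current)) * dt_s


def predict_ok(rec: WeldRecord, dt_s: float, min_energy_j: float) -> bool:
    """Stand-in 'model': a weld is predicted good if enough energy was delivered."""
    return delivered_energy(rec, dt_s) >= min_energy_j


def accuracy(records: List[WeldRecord], dt_s: float, min_energy_j: float) -> float:
    """Fraction of records where the prediction matches the inspection label."""
    hits = sum(predict_ok(r, dt_s, min_energy_j) == r.inspected_ok for r in records)
    return hits / len(records)


# Synthetic records for illustration only; these are not Audi measurements.
SAMPLES = [
    WeldRecord(voltage=[2.0] * 4, current=[1000.0] * 4, inspected_ok=True),
    WeldRecord(voltage=[1.0] * 4, current=[500.0] * 4, inspected_ok=False),
]

if __name__ == "__main__":
    print(f"accuracy on synthetic data: {accuracy(SAMPLES, dt_s=0.001, min_energy_j=5.0):.2f}")
```

A production model would combine many more inputs, such as weld configuration, metal type, and electrode health, as the text notes.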

These models were then deployed at two levels: at the line itself and at the cell level. The result was that the systems were able to predict poor welds before they were performed. This has significantly raised the bar in terms of quality. Central to the success of this exercise was the collection and processing of data relating to a mission critical process at the edge (i.e., on the production line) rather than in the cloud. As a result, adjustments to the process could be made in real time.

Harvesting the benefits of integration at the boundary between embedded and IT

There are a number of technical areas where quite a lot of progress is still needed. The focus at Lynx is primarily on two areas:

- Delivering deterministic behavior in multicore systems: as multiple applications are consolidated to run on a single multicore processor, the sharing of resources like memory and I/O causes interference, which makes it difficult to guarantee the behavior of time-critical functionality

- Delivering strict isolation for applications to ensure high levels of system reliability and security
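One common way to observe the interference described in the first point is to run a periodic task and measure how far its actual cycle times drift from the intended period. The sketch below illustrates that measurement only; it is not a Lynx tool, the function names are hypothetical, and Python on a general-purpose OS is not a real-time environment, so the numbers it prints indicate the idea rather than what a deterministic platform would deliver.

```python
import time
from typing import List, Sequence


def collect_wakeups(period_s: float, cycles: int) -> List[float]:
    """Run a periodic loop and record when each cycle actually started."""
    stamps: List[float] = []
    next_deadline = time.perf_counter()
    for _ in range(cycles):
        stamps.append(time.perf_counter())
        next_deadline += period_s
        delay = next_deadline - time.perf_counter()
        if delay > 0:
            time.sleep(delay)
    return stamps


def worst_case_jitter(stamps: Sequence[float], period_s: float) -> float:
    """Largest absolute deviation of any cycle-to-cycle interval from the period."""
    intervals = [b - a for a, b in zip(stamps, stamps[1:])]
    return max((abs(i - period_s) for i in intervals), default=0.0)


if __name__ == "__main__":
    period = 0.010  # 10 ms control period, chosen arbitrarily for the example
    stamps = collect_wakeups(period_s=period, cycles=50)
    print(f"worst-case jitter: {worst_case_jitter(stamps, period) * 1e6:.0f} us")
```

Running the loop while other workloads compete for the same memory and I/O typically makes the worst-case figure grow, which is the effect that makes time-critical behavior hard to guarantee on shared multicore hardware.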

There are a number of other topics, including time-sensitive data management, edge analytics and networking functionality for these complex connected systems. For example, what will be the right approach for deploying the orchestration and scheduling of these deterministic, time-sensitive applications?

In conclusion, the mission critical edge is here. It is starting to realize the original intent of fog computing. We are starting to harvest the great benefits of real integration at the boundary between embedded technology and information technology. Much more work is needed, and this will take a village. It will need a broad set of ecosystem partners to simplify how this technology is delivered to .

Flavio Bonomi is a board technology advisor for Lynx Software Technologies. He is a visionary, entrepreneur, and technologist who thrives at the boundary between applied research and advanced technology commercialization. Bonomi is the co-founder and was the first CEO of Nebbiolo Technologies, a Silicon Valley startup focused on delivering the power of fog computing to the industrial automation market and beyond. Previously, he was a Fellow, vice president, and head of the advanced architecture and research organization at Cisco Systems.
