Humans learn differently from neural networks. If a human comes back to a sport after years away, they might be rusty, but they’ll still remember much of what they learned decades ago. A typical neural network will forget the last thing it was trained to do. Nearly all neural networks today suffer from this problem, known as “catastrophic forgetting.”
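To make the problem concrete, here is a minimal sketch of forgetting in a toy linear model trained on two tasks in sequence. Everything in it (the tasks, the function names, the learning rate) is an illustrative assumption and is not taken from the ANML paper.

```python
import numpy as np

def mse(w, X, y):
    """Mean squared error of the linear model X @ w against targets y."""
    return float(np.mean((X @ w - y) ** 2))

def train(w, X, y, lr=0.1, steps=200):
    """Plain gradient descent on the mean squared error."""
    for _ in range(steps):
        grad = 2.0 * X.T @ (X @ w - y) / len(y)
        w = w - lr * grad
    return w

def forgetting_demo(seed=0):
    """Train on hypothetical task A, then task B; report task A error before and after."""
    rng = np.random.default_rng(seed)
    X_a = rng.normal(size=(100, 3))
    X_b = rng.normal(size=(100, 3))
    # Two made-up tasks whose ideal weights conflict with each other.
    y_a = X_a @ np.array([1.0, -2.0, 0.5])
    y_b = X_b @ np.array([-1.0, 2.0, 3.0])

    w = train(np.zeros(3), X_a, y_a)
    loss_a_before = mse(w, X_a, y_a)   # near zero: task A is learned

    w = train(w, X_b, y_b)             # same weights, now trained only on task B
    loss_a_after = mse(w, X_a, y_a)    # much larger: task A has been overwritten
    return loss_a_before, loss_a_after
```

Because both tasks share the same weights and nothing protects what was learned first, training on task B simply overwrites the solution to task A.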

It’s the Achilles’ heel of machine learning, OpenAI research scientist Jeff Clune told VentureBeat, because it keeps machine learning practitioners from achieving “continual learning,” the ability to remember previous tasks. But some systems can be taught to remember.

Before joining OpenAI last month to lead its multi-agent team, Clune worked with researchers from Uber AI Labs and the University of Vermont. This week, they jointly shared ANML (A Neuromodulated Meta-Learning algorithm), which is able to learn 600 sequential tasks with minimal catastrophic forgetting.

“This is relatively unheard-of in machine learning. To my knowledge, it’s the longest sequence of tasks that AI has been able to do, and at the end of it, it’s still pretty good at all the tasks that it saw,” Clune said. “I think that these sorts of advances will be used in almost every situation where we use AI. It will just make AI better.”

Clune helped cofound Uber AI Labs in 2017, following the acquisition of Geometric Intelligence, and is one of seven coauthors of a paper called “Learning to Continually Learn,” published Monday on arXiv.

Teaching AI systems to learn and remember thousands of tasks is what the paper’s coauthors call a long-standing grand challenge of AI. Such approaches could enable the creation of AI systems that can handle and remember a range of tasks, and Clune believes AI like ANML is key to a faster path to the greatest challenge: artificial general intelligence (AGI).

In another paper Clune wrote before joining OpenAI (a startup with billions in funding aimed at creating the world’s first AGI), he argued that a faster path to AGI can be achieved by improving meta-learning algorithm architectures, the algorithms themselves, and the automatic generation of training environments.

“If you had a system that was searching for architectures, creating better and better learning algorithms, and automatically creating its own learning challenges and solving them, and then going on to harder challenges … [If you] put those three pillars together … you have what I call an ‘AI-generating algorithm.’ That’s an alternative path to AGI that I think will ultimately be faster,” Clune told VentureBeat.

ANML makes progress by meta-learning solutions to a problem instead of manually engineering them. That is in line with a trend toward searching for algorithm architectures to find state-of-the-art results, instead of hand-coding algorithms from scratch.

Last week, an MIT Tech Review article argued that OpenAI lacks a clear plan for reaching AGI, as some of its breakthroughs are the product of computational resources and technical innovations developed in other labs. The average OpenAI employee, the article said, believes it will take 15 years to reach AGI.

An OpenAI spokesperson told VentureBeat that Clune’s vision of AI-generating algorithms is consistent with OpenAI’s research interests and with previous work, like OpenAI’s model for a robotic hand that solves a Rubik’s Cube, but did not share an opinion on Clune’s theory about a faster path to AGI.

OpenAI also declined to answer questions about whether it has a roadmap to AGI or to comment on the MIT Tech Review article, but said that Clune, as head of the multi-agent team at OpenAI, will focus on AI-generating algorithms, multiple interacting agents, open-ended algorithms, automatically generating training environments, and other variations of deep reinforcement learning.

“They hired me to pursue this vision and they’re … a lot of their work is very aligned with this vision, and they like the idea and hired me to keep working on it in part because it’s aligned with work they’ve published,” Clune said.

ANML, neuromodulation, and the human brain

ANML achieves state-of-the-art continual learning results by meta-learning the parameters of a neuromodulation network. Neuromodulation is a process found in the human brain, where neurons can inhibit or excite other neurons, including inciting other neurons to learn.

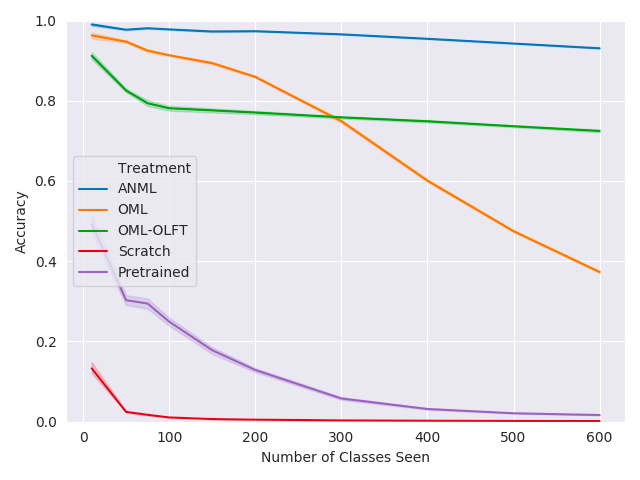

Above: Meta-test coaching classification accuracy

In his lab at the University of Wyoming, Clune and colleagues demonstrated that they could completely overcome catastrophic forgetting, but only for smaller, simpler networks. ANML scales catastrophic forgetting reduction to a deep learning model with 6 million parameters.

ANML also expands upon OML, a model that was introduced last year by Martha White and Khurram Javed at NeurIPS 2019 and is capable of completing up to 200 tasks without catastrophic forgetting.

ANML differs from OML because, Clune said, his team found that turning learning on and off is not sufficient on its own at scale; the approach also requires modulating the activation of neurons.

“What we do in this work is we allow the network to have more power. The neuromodulatory network can kind of change the activation pattern in the normal brain, if you will, the brain whose job it is to do tasks like ride a bike, play chess, or recognize images. It can change that kind of activity and say ‘I only want to hear from the chess-playing part of your network right now,’ and then that indirectly allows it to control where learning happens. Because if only the chess-playing part of the network is active, then according to stochastic gradient descent, which is the algorithm that you use for all deep learning, then learning will only happen more or less in that network.”
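The mechanism Clune describes can be sketched in a few lines. The example below is a simplified illustration, not the ANML implementation: the layer sizes, the function name `gated_gradients`, and the hard 0/1 gate are assumptions made here for clarity, standing in for the output of a learned neuromodulatory network. It shows that when a gate silences some hidden units, the gradients for the weights tied to those units are exactly zero, so a gradient descent step leaves them unchanged.

```python
import numpy as np

def gated_gradients(W1, w2, x, target, gate):
    """Forward and backward pass through a tiny two-layer network.

    gate is a hypothetical per-unit mask that multiplies the hidden
    activations, as a neuromodulatory signal might.
    """
    h = np.tanh(W1 @ x)              # hidden activations
    gated = gate * h                 # gate silences some units
    y = w2 @ gated                   # scalar prediction
    dy = y - target                  # d(loss)/dy for loss = 0.5 * (y - target)^2

    grad_w2 = dy * gated             # zero wherever the gated activation is zero
    dh = dy * w2 * gate              # the gate also blocks the backward signal
    grad_W1 = np.outer(dh * (1.0 - h ** 2), x)
    return grad_W1, grad_w2

rng = np.random.default_rng(0)
W1 = rng.normal(size=(6, 4))         # 4 inputs -> 6 hidden units
w2 = rng.normal(size=6)              # 6 hidden units -> 1 output
x = rng.normal(size=4)

# Only the first three units (say, the "chess-playing" part) are allowed to be active.
gate = np.array([1.0, 1.0, 1.0, 0.0, 0.0, 0.0])
grad_W1, grad_w2 = gated_gradients(W1, w2, x, target=1.0, gate=gate)

# A gradient descent step therefore only changes weights of the active units.
lr = 0.1
W1_new = W1 - lr * grad_W1
w2_new = w2 - lr * grad_w2
```

With a soft gate between 0 and 1 rather than a hard mask, updates to the suppressed units would be scaled down instead of eliminated, which fits Clune’s “more or less.”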

In initial ANML work, the model uses computer vision to recognize human handwriting. As next steps, Clune said he and others at Uber AI Labs and the University of Vermont will scale ANML to try to accomplish more complex tasks. Work on ANML is supported by DARPA’s Lifelong Learning Machines award.