A device finding out fashion’s efficiency is best as just right as the standard of the knowledge set on which it’s skilled, and within the area of self-driving cars, it’s essential this efficiency isn’t adversely impacted by means of mistakes. A troubling record from laptop imaginative and prescient startup Roboflow alleges that precisely this situation happened — in accordance to founder Brad Dwyer, an important bits of information have been neglected from a corpus used to coach self-driving automotive fashions.

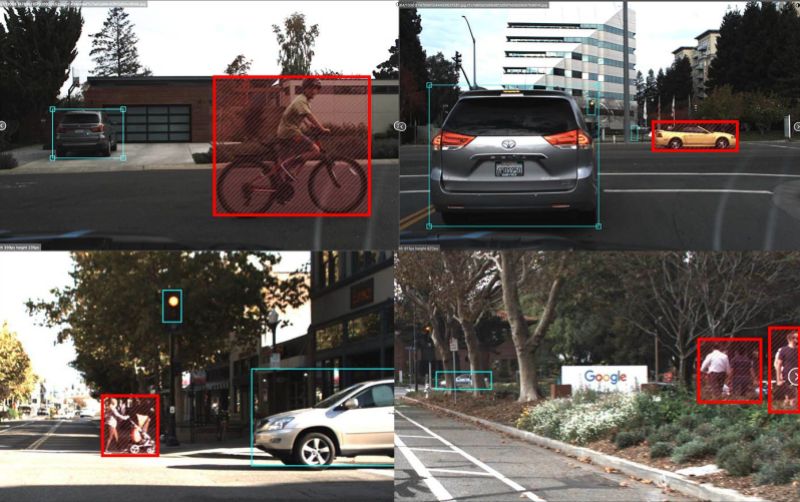

Dwyer writes that Udacity Dataset 2, which incorporates 15,000 pictures captured whilst riding in Mountain View and neighboring towns all the way through sunlight, has omissions. 1000’s of unlabeled cars, masses of unlabeled pedestrians, and dozens of unlabeled cyclists are found in kind of five,000 of the samples, or 33% (217 lack any annotations in any respect however in reality comprise automobiles, vehicles, boulevard lighting, or pedestrians). Worse are the circumstances of phantom annotations and duplicated bounding containers (the place “bounding field” refers to things of pastime), along with “vastly” outsized bounding containers.

It’s problematic taking into consideration that labels are what permit an AI machine to grasp the results of patterns (like when an individual steps in entrance of a automotive) and assessment long term occasions in keeping with that wisdom. Mislabeled or unlabeled pieces may just result in low accuracy and deficient decision-making in flip, which in a self-driving automotive can be a recipe for crisis.

Above: A number of instance pictures containing pedestrians that didn’t comprise any annotations within the authentic dataset.

Symbol Credit score: Roboflow

“Open supply datasets are nice, but when the general public goes to accept as true with our neighborhood with their protection we wish to do a greater activity of making sure the knowledge we’re sharing is whole and correct,” wrote Dwyer, who famous that 1000’s of scholars in Udacity’s self-driving engineering direction use Udacity Dataset 2 at the side of an open-source self-driving automotive mission. “If you happen to’re the usage of public datasets on your tasks, please do your due diligence and test their integrity ahead of the usage of them within the wild.”

It’s neatly understood that AI is susceptible to bias issues stemming from incomplete or skewed information units. For example, phrase embedding, a not unusual algorithmic coaching method that comes to linking phrases to vectors, unavoidably alternatives up — and at worst amplifies — prejudices implicit in supply textual content and discussion. Many facial popularity methods misidentify other people of colour extra ceaselessly than white other people. And Google Pictures as soon as infamously classified footage of darker-skinned other people as “gorillas.”

However underperforming AI may just inflict way more hurt if it’s put in the back of the wheel of a car, with the intention to talk. There hasn’t been a documented example of a self-driving automotive inflicting a collision, however they’re on public roads best in small numbers. That’s more likely to trade — as many as eight million driverless automobiles shall be added to the street in 2025, in line with advertising and marketing company ABI, and Analysis and Markets anticipates there shall be some 20 million self reliant automobiles in operation within the U.S. by means of 2030.

Above: Examples of mistakes (red-highlighted annotations have been lacking within the authentic dataset).

Symbol Credit score: Roboflow

If the ones hundreds of thousands of automobiles run improper AI fashions, the have an effect on may well be devastating, which might make a public already cautious of driverless cars extra skeptical. Two research — one printed by means of the Brookings Establishment and any other by means of the Advocates for Freeway and Auto Protection (AHAS) — discovered that a majority of American citizens aren’t satisfied of driverless automobiles’ protection. Greater than 60% of respondents to the Brookings ballot stated that they weren’t vulnerable to journey in self-driving automobiles, and virtually 70% of the ones surveyed by means of the AHAS expressed issues about sharing the street with them.

A method to the knowledge set downside would possibly lie in higher labeling practices. In step with the Udacity Dataset 2’s GitHub web page, crowd-sourced corpus annotation company Autti treated the labeling, the usage of a mixture of device finding out and human taskmasters. It’s unclear whether or not this manner would possibly have contributed to the mistakes — we’ve reached out to Autti for more info — however a stringent validation step would possibly’ve helped to highlight them.

For its phase, Roboflow tells Sophos’ Bare Safety that it plans to run experiments with the unique information set and the corporate’s fastened model of the knowledge set, which it’s made to be had in open supply, to peer how a lot of an issue it will had been for coaching quite a lot of fashion architectures. “Of the datasets I’ve checked out in different domain names (e.g. medication, animals, video games), this one stood out as being of in particular deficient high quality,” Dwyer instructed the newsletter. “I’d hope that the massive firms who’re in reality hanging automobiles at the highway are being a lot more rigorous with their information labeling, cleansing, and verification processes.”